There have been significant developments regarding Mamba (SSM) architectures in recent days. In summary:

- Carnegie Mellon, Princeton, Cartesia AI and Together AI have released Mamba-3, a new SSM that prioritizes inference efficiency and expressive state evolution rather than pure training-speed wins like Mamba-2. Mamba-3 introduces a more general recurrence via an exponential-trapezoidal discretization scheme, complex-valued SSM states, and MIMO (multi-input, multi-output) SSMs to boost accuracy without slowing decoding, and it already outperforms Mamba-2 and strong linear-attention baselines on language-modeling tasks.

- NVIDIA's Nemotron-3 Super uses a hybrid Mamba-Transformer MoE stack: Mamba-style state space model (SSM) layers are interleaved with Transformer blocks to combine linear-time sequence processing with strong long-range attention and in-context reasoning.

- Across several labs and industry partners, the near-term roadmap emphasizes larger Mamba-based models (10B to 100B parameters), better Mamba-Transformer hybrids tuned per domain, and edge-ready deployments. All of these leverage the fact that Mamba-based SSMs process long sequences in roughly linear time and with lower memory cost than vanilla Transformers.
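To make the linear-time, constant-memory point concrete, here is a minimal sketch of a scalar selective SSM recurrence in plain Python. It is an illustration under simplifying assumptions, not Mamba-2 or Mamba-3 code: the state is a single float, the decay uses a simple exp(delta * A) form rather than Mamba-3's exponential-trapezoidal scheme, and the function name selective_scan and its arguments are hypothetical. Each step does a constant amount of work on a fixed-size state, so a length-L sequence costs O(L) time, whereas causal attention revisits a growing key/value cache at every step.

```python
import math


def selective_scan(x, delta, A, B, C):
    """Run a scalar-state selective SSM over one sequence (simplified sketch).

    x, delta, B, C: per-timestep floats (delta, B, C would be input-dependent in Mamba).
    A: a single float, typically negative so the state decays.
    Returns y_t = C_t * h_t with h_t = exp(delta_t * A) * h_{t-1} + delta_t * B_t * x_t.
    """
    h = 0.0  # fixed-size state: memory does not grow with sequence length
    ys = []
    for x_t, d_t, b_t, c_t in zip(x, delta, B, C):
        decay = math.exp(d_t * A)        # in (0, 1] when A <= 0 and delta_t >= 0
        h = decay * h + d_t * b_t * x_t  # one constant-cost update per step
        ys.append(c_t * h)
    return ys


# No decay (A = 0): the output is a running sum of the inputs.
print(selective_scan([1.0, 2.0, 3.0], [1.0] * 3, 0.0, [1.0] * 3, [1.0] * 3))  # [1.0, 3.0, 6.0]
```

With A = 0 the state never decays and the output is a running sum; with a very negative A the state is forgotten immediately and the output simply echoes the scaled input.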

Meanwhile, the core concept of Karpathy's autoresearch gained widespread acceptance very quickly, becoming one of the pioneering ideas for taking a first step toward self-improvement. Karpathy even included this entry in his repo:

"One day, frontier AI research used to be done by meat computers in between eating, sleeping, having other fun, and synchronizing once in a while using sound wave interconnect in the ritual of "group meeting". That era is long gone. Research is now entirely the domain of autonomous swarms of AI agents running across compute cluster megastructures in the skies. The agents claim that we are now in the 10,205th generation of the code base, in any case no one could tell if that's right or wrong as the "code" is now a self-modifying binary that has grown beyond human comprehension. This repo is the story of how it all began. -@karpathy, March 2026."

What Karpathy built is not a giant training stack or an overengineered platform. It is a tight experimental loop that an agent can actually drive.

What I wanted was the same basic setup, but for the Mamba family of state space model (SSM) architectures.

Why Mamba

Most of the early autoresearch experiments naturally centered on GPT/nanoGPT architectures. Mamba, however, is interesting for a different set of reasons.

State space models have become especially compelling in long-sequence settings, inference-sensitive workloads and places where sequence efficiency matters more than simply reusing the same transformer recipe forever.

If the point of autoresearch is to test whether an agent can improve a model recipe under a fixed budget, then it makes sense to ask the same question on top of Mamba as well.

Can an agent iteratively improve a Mamba training recipe under the same hard constraints Karpathy used for GPT?

That is what this repo is for.

What The Repo Actually Is

autoresearch-mamba is deliberately small. The structure mirrors the same discipline that makes Karpathy's setup useful:

- prepare_mlx_mamba_3.py: the fixed, architecture-aware MLX prep entry point

- train_mamba_3_mlx.py: the main architecture-aware MLX training surface for both mamba-2 and mamba-3

- train_hybrid_moe_mlx.py: the hybrid Mamba-Transformer MoE training surface (Nemotron-H style)

- program.md: instructions for the agent loop

- prepare.py and train_mamba.py: a secondary PyTorch/CUDA path

- analysis.ipynb: a notebook for analyzing results.tsv and plotting progress over time

The canonical path is Apple Silicon + MLX. The CUDA/PyTorch path exists as a secondary reference implementation.

Architecturally, the repo now supports Mamba-2, Mamba-3, and hybrid Mamba-Transformer MoE through the MLX entry paths. The Mamba-3 side is aimed at training-oriented autoresearch rather than reproducing every upstream fused kernel implementation detail from mamba_ssm. The hybrid side adapts the Nemotron-H block-level hybridization pattern for single-file MLX training.

The point is to keep a compact, agent-friendly research harness that preserves the core block logic while staying small enough to iterate on.

Hybrid Mamba-Transformer MoE

The latest addition to the repo is a hybrid architecture that interleaves Mamba SSM layers with self-attention and Mixture-of-Experts, following NVIDIA's Nemotron-H design.

The key insight from Nemotron-H is block-level hybridization: instead of each layer being a full transformer block (attention + FFN), every layer is one standalone residual block of a specific type. A pattern string like ME*EM encodes the entire architecture:

- "M" = Mamba SSM block (sequence mixing via state space dynamics)

- "E" = MoE FFN block (per-token transformation via routed experts)

- "*" = Self-Attention block (global sequence mixing)

- "-" = Dense MLP block

Mamba layers handle efficient sequence processing. MoE layers provide per-token capacity with sparse activation. Attention layers add global context at key positions. The ME pair functions like one logical transformer layer: Mamba does the mixing, MoE does the feed-forward. But because each is a separate residual block, the pattern is fully composable.
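A pattern string like ME*EM can be expanded into a layer stack in a few lines. The sketch below is a hypothetical plain-Python illustration, not code from train_hybrid_moe_mlx.py: parse_pattern, hybrid_forward and the block-name strings are made up for this example, RMSNorm stands in for whatever pre-norm the repo actually uses, and each block acts on a single token vector so the residual wiring stays visible. What it shows is composability: every symbol becomes one pre-norm residual update, x <- x + f(norm(x)), so reordering or extending the pattern needs no other changes.

```python
import math

# Symbol legend from the post; the right-hand names are illustrative labels.
BLOCK_SYMBOLS = {"M": "mamba", "E": "moe", "*": "attention", "-": "mlp"}


def parse_pattern(pattern):
    """Expand a hybrid pattern string such as "ME*EM" into per-layer block types."""
    unknown = sorted(set(pattern) - set(BLOCK_SYMBOLS))
    if unknown:
        raise ValueError(f"unknown block symbols: {unknown}")
    return [BLOCK_SYMBOLS[ch] for ch in pattern]


def rms_norm(x, eps=1e-6):
    """RMS-normalize one token vector (assumed pre-norm; no learned scale here)."""
    scale = 1.0 / math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v * scale for v in x]


def hybrid_forward(x, pattern, block_fns):
    """Apply one pre-norm residual block per symbol: x <- x + f(norm(x))."""
    for kind in parse_pattern(pattern):
        update = block_fns[kind](rms_norm(x))
        x = [a + b for a, b in zip(x, update)]
    return x


print(parse_pattern("ME*EM"))  # ['mamba', 'moe', 'attention', 'moe', 'mamba']
```

Because every block shares the same residual interface, block_fns only has to provide one callable per block type; the pattern alone decides depth and ordering.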

The implementation (train_hybrid_moe_mlx.py) is a single-file, self-contained MLX script. It includes CausalSelfAttention with grouped-query attention and RoPE, MoELayer with top-k softmax routing and Switch Transformer style load-balancing loss, and HybridResidualBlock which wraps any block type in a uniform pre-norm + residual interface. The Mamba blocks are reused directly from the existing Mamba-2/Mamba-3 code.
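The routing and load-balancing described above can be sketched in plain Python. This is an assumption-laden illustration rather than the repo's MLX MoELayer: route_top_k is a hypothetical name, gate weights are renormalized over the selected experts (a common choice, assumed here), and the Switch Transformer auxiliary loss, originally defined for top-1 routing as num_experts * sum_i f_i * P_i, is generalized by counting each of the k routing slots per token in f_i.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k=2):
    """Top-k softmax routing plus a Switch-style load-balancing loss (sketch).

    router_logits: one list of per-expert logits for each token.
    Returns (assignments, aux_loss); assignments[t] is a list of
    (expert_index, gate_weight) pairs whose weights sum to 1.
    """
    num_tokens = len(router_logits)
    num_experts = len(router_logits[0])
    probs = [softmax(row) for row in router_logits]
    assignments = []
    dispatch_counts = [0] * num_experts
    for p in probs:
        top = sorted(range(num_experts), key=lambda i: p[i], reverse=True)[:k]
        norm = sum(p[i] for i in top)  # renormalize over the selected experts
        assignments.append([(i, p[i] / norm) for i in top])
        for i in top:
            dispatch_counts[i] += 1
    # f_i: fraction of routing slots sent to expert i; P_i: mean router probability.
    f = [c / (num_tokens * k) for c in dispatch_counts]
    P = [sum(p[i] for p in probs) / num_tokens for i in range(num_experts)]
    aux_loss = num_experts * sum(fi * pi for fi, pi in zip(f, P))
    return assignments, aux_loss


assignments, aux = route_top_k([[0.0, 0.0, 0.0, 0.0]] * 8, k=2)
print(assignments[0], aux)  # [(0, 0.5), (1, 0.5)] 1.0
```

For a uniform router the auxiliary loss comes out to 1.0; when every token strongly prefers the same expert, it approaches 2.0 in this 4-expert, top-2 setting, which is exactly the imbalance the loss is meant to penalize.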

With the local preset (ME*EM, 4 experts, top-2, d_model=256), the model trains at ~29K tokens/sec on Apple Silicon, producing a 4.7M param model (3.9M active per token) with a val_bpb of 2.059 in five minutes.
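The preset numbers above support some back-of-the-envelope arithmetic. The sketch assumes the entire 300-second budget is spent at the quoted throughput, and that the gap between total and active parameters comes only from the unselected experts in the MoE blocks (ME*EM has two E blocks; with 4 experts and top-2, two experts per block sit idle for each token). Because 29K, 4.7M and 3.9M are rounded, the results are ballpark figures, and the helper names are hypothetical.

```python
def tokens_in_budget(tokens_per_sec, budget_s=300):
    """Approximate tokens processed in one fixed-budget run."""
    return tokens_per_sec * budget_s


def params_per_expert(total, active, n_moe_layers, n_experts, top_k):
    """Back out per-expert parameters, assuming only unselected experts are inactive."""
    inactive = total - active
    return inactive / (n_moe_layers * (n_experts - top_k))


pattern = "ME*EM"
n_moe_layers = pattern.count("E")  # 2 MoE blocks in the local preset
print(tokens_in_budget(29_000))  # 8700000
print(params_per_expert(4_700_000, 3_900_000, n_moe_layers, n_experts=4, top_k=2))  # 200000.0
```

So a five-minute local run sees on the order of 8.7M training tokens, and each expert holds roughly 0.2M parameters.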

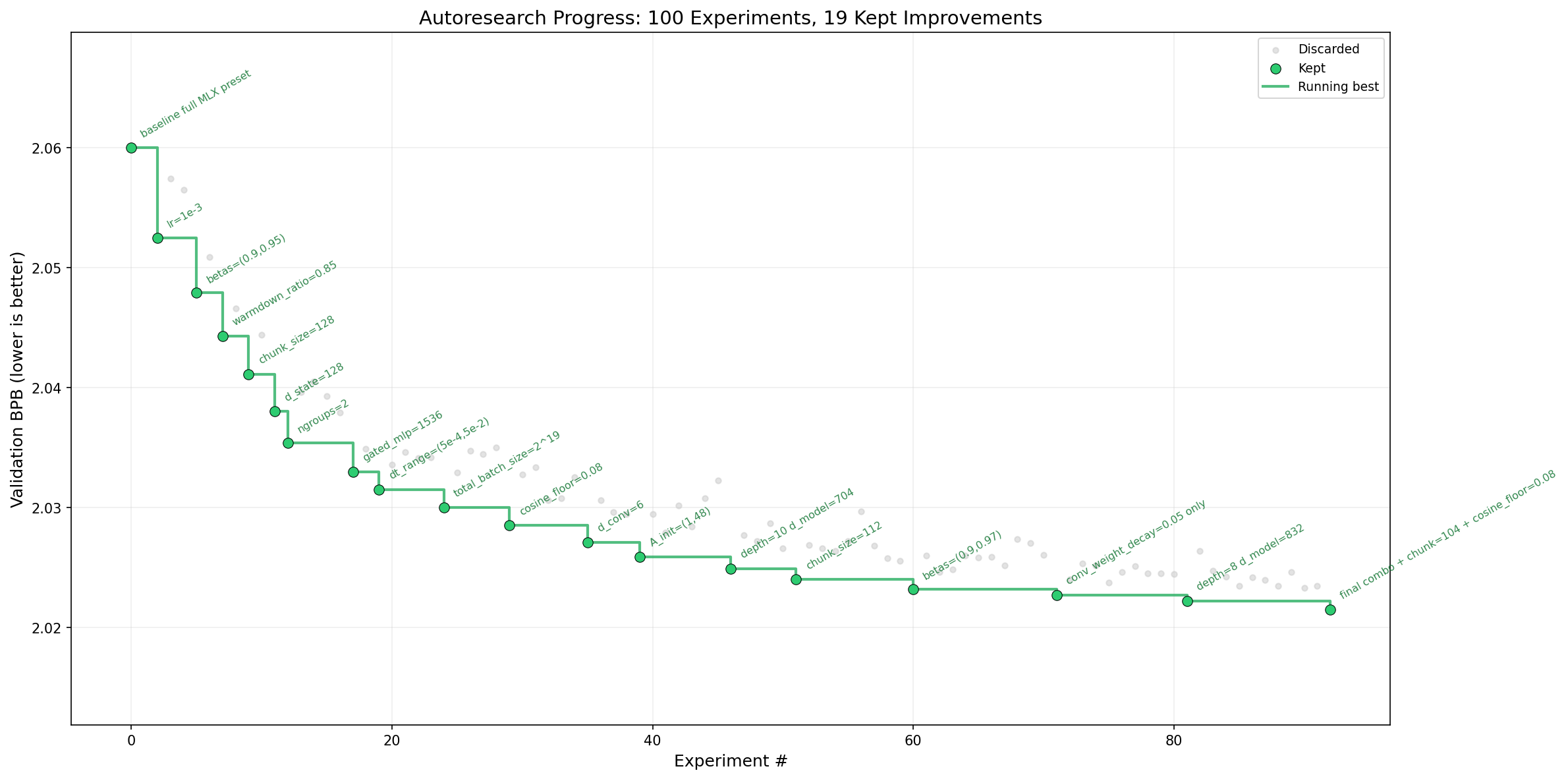

The Autoresearch Loop

The loop is intentionally simple:

- Prepare data and tokenizer once.

- Run training for a fixed TIME_BUDGET = 300 seconds.

- Evaluate using val_bpb.

- Keep the change if val_bpb improved.

- Otherwise, discard it and move on.
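The keep/discard loop above reduces to a greedy search on val_bpb. Here is a minimal sketch with hypothetical names: autoresearch_loop, the candidate changes, and run_experiment (which would train for TIME_BUDGET seconds and return val_bpb from the fixed evaluator) are all placeholders, and a real implementation would also revert the training file when a change is discarded. The usage below feeds in toy val_bpb values, not results from the repo.

```python
TIME_BUDGET = 300  # seconds per training run, as in the post


def autoresearch_loop(baseline_bpb, candidates, run_experiment):
    """Greedy keep/discard search on val_bpb (lower is better).

    candidates: hypothetical proposed changes, e.g. edits to the training file.
    run_experiment: hypothetical callable that trains for TIME_BUDGET seconds with
    a change applied and returns val_bpb from the fixed evaluator.
    """
    best_bpb = baseline_bpb
    kept, log = [], []
    for change in candidates:
        val_bpb = run_experiment(change)
        if val_bpb < best_bpb:  # strict improvement: keep the change
            best_bpb = val_bpb
            kept.append(change)
            status = "keep"
        else:                   # no improvement: discard it and move on
            status = "discard"
        log.append((change, val_bpb, status))
    return best_bpb, kept, log


# Toy val_bpb values for illustration only, not measurements from the repo.
toy_results = {"change_a": 2.08, "change_b": 2.09, "change_c": 2.05}
best, kept, log = autoresearch_loop(2.10, toy_results, toy_results.get)
print(best, kept)  # 2.05 ['change_a', 'change_c']
```

Note that change_b is discarded even though it beats the baseline: each candidate is judged against the best recipe so far, not the starting point.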

That five-minute budget matters. It forces the question to become: what is the best model and training recipe an agent can discover on this hardware in this exact amount of time?

It also keeps the search honest. The agent cannot win by quietly changing the evaluator, expanding the benchmark, or redefining success after the fact. It has one target: lower val_bpb under the fixed harness.
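One way to make "the agent cannot quietly change the evaluator" mechanically checkable is to fingerprint the fixed files before each experiment and refuse to score a run if any of them changed. The post does not say the repo does this; the sketch below is a hypothetical guard using only the Python standard library, and which files count as fixed (for example prepare_mlx_mamba_3.py) is left to the caller.

```python
import hashlib
from pathlib import Path


def fingerprint(paths):
    """Map each fixed file (evaluator, data prep, ...) to its SHA-256 digest."""
    return {str(p): hashlib.sha256(Path(p).read_bytes()).hexdigest() for p in paths}


def changed_files(before, after):
    """List files whose digests differ between two fingerprints (missing counts as changed)."""
    return sorted(p for p in before if after.get(p) != before[p])


def check_harness_untouched(before, paths):
    """Raise if any fixed file was modified during an experiment."""
    modified = changed_files(before, fingerprint(paths))
    if modified:
        raise RuntimeError(f"fixed harness files changed: {modified}")
```

In a loop like the one described here, fingerprint would run once before the agent starts editing, and check_harness_untouched before each val_bpb result is accepted.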

Why val_bpb

The metric here is validation bits per byte (val_bpb). Lower is better.

I like this choice for the same reason Karpathy did: it stays tied to language modeling performance without becoming too tokenization-specific. It is not a GPT metric. It is not a transformer metric. It works just as well for a Mamba autoregressive language model.
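Bits per byte is tokenizer-agnostic because it normalizes the model's total log-loss by the number of raw bytes rather than the number of tokens. Here is a minimal sketch of the standard conversion, assuming per-token losses in nats (the natural-log cross-entropy most frameworks return) and the UTF-8 byte length of each target token. The function name is hypothetical, and the repo's evaluator may differ in details such as which tokens it counts.

```python
import math


def bits_per_byte(token_nll_nats, token_num_bytes):
    """Convert per-token negative log-likelihoods (nats) into bits per byte.

    token_nll_nats: cross-entropy of each target token, in nats.
    token_num_bytes: UTF-8 byte length of each target token.
    """
    total_bits = sum(token_nll_nats) / math.log(2)  # nats -> bits
    return total_bits / sum(token_num_bytes)         # normalize by bytes, not tokens


# The same 4 bytes under two hypothetical tokenizations.
fine = bits_per_byte([math.log(2)] * 4, [1, 1, 1, 1])  # 4 tokens, 1 bit each
coarse = bits_per_byte([2 * math.log(2)] * 2, [2, 2])  # 2 tokens, 2 bits each
print(fine, coarse)
```

Both values come out at about 1.0: the two tokenizers split the same bytes differently and their per-token losses differ by a factor of two, but the total information cost per byte is identical.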

One subtle but important point: in a fixed five-minute autoresearch loop, the final val_bpb is not just about the model. It is also about how much optimization progress your hardware can fit into the time budget. So comparisons are meaningful only when the setup is held constant: same evaluator, same preset, same platform class.

MLX, GPU, And The Local Preset

One of the practical constraints here was that I wanted this to work not only as a theoretical repo, but as something I could actually test on Apple Silicon.

So the repo has two practical operating modes:

- the built-in default MLX path, which can target either mamba-2 or mamba-3

- an optional local preset path for smaller-memory local testing

That local preset workflow turned out to be important. It let me validate the loop end-to-end on constrained Apple Silicon while keeping the default architecture-aware configuration intact as the canonical tracked setup.

MLX-specific detail, briefly: the canonical local path is prepare_mlx_mamba_3.py + train_mamba_3_mlx.py, the same path can switch between mamba-2 and mamba-3, and the smaller local preset flow makes Apple Silicon smoke testing practical. In the current state of the repo, Mamba-3 SISO (single-input, single-output) runs end-to-end locally, while larger Mamba-3 MIMO runs are mainly bounded by the Metal memory budget rather than by a missing MLX code path.

In other words: the repo is not just "MLX-compatible" in theory. It is set up so the autoresearch loop can actually run locally, collect results.tsv, and generate a progress plot without changing the underlying philosophy.

What I Wanted To Preserve From Karpathy's Repo

There are a lot of ways to turn "autonomous research" into fluff. The thing I wanted to preserve from Karpathy's setup was not the vibe. It was the discipline:

- one fixed evaluator

- one main mutable training file

- one fixed time budget

- one ground-truth metric

- one keep/discard loop

That design is what makes the repo useful. Without those constraints, you do not really have autoresearch. You just have an agent editing files until the story sounds good.

What Ships Today

The release includes:

- an architecture-aware MLX autoresearch path for mamba-2 and mamba-3

- a hybrid Mamba-Transformer MoE training path (Nemotron-H style, train_hybrid_moe_mlx.py)

- a training-oriented MLX Mamba-3 path with SISO and MIMO branches

- the secondary PyTorch/CUDA reference path

- a documented README and program.md covering all three architectures

- local MLX preset workflows for smaller Apple Silicon testing (pure Mamba and hybrid)

- an analysis notebook for plotting progress from results.tsv

The repo is public here:

Wrapping up

The repo covers Mamba-2, Mamba-3, and hybrid Mamba-Transformer MoE in the MLX autoresearch path. The important part, the loop itself, is in place: a compact, testable autoresearch harness for Mamba and hybrid architectures, with MLX as a first-class path instead of an afterthought.

That was the goal.