The problem with starting cold

But there's a fundamental gap. Every RLM run starts completely fresh. The model has no memory of what worked before, what failed, or which strategies suit which problem types. Run the same category of problem ten times and it'll make the same mistakes on the tenth run that it made on the first. All that execution experience just disappears.

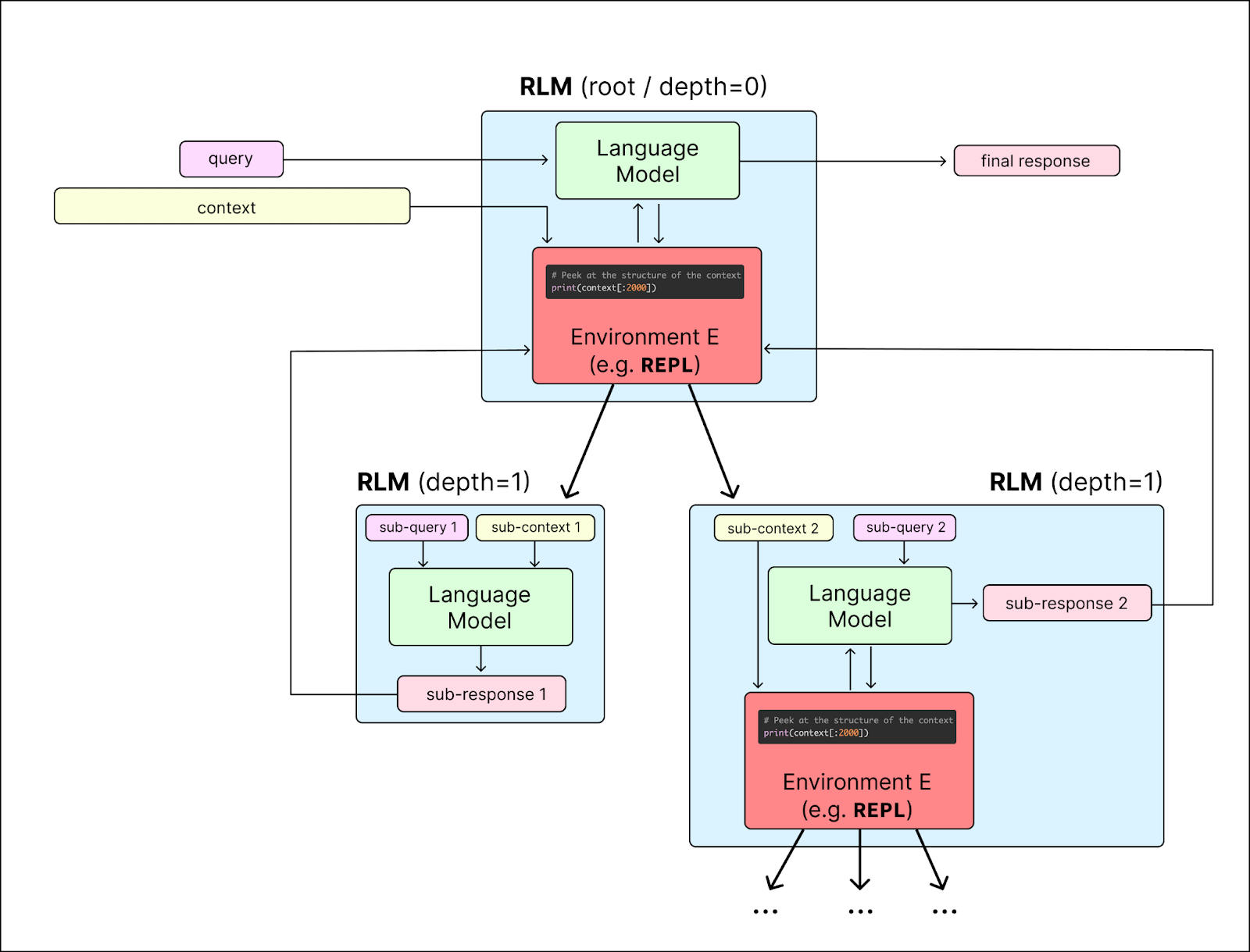

How it works

Mem-RLM operates on three timescales that run concurrently during inference:

Fast — standard RLM execution. Every iteration, the model writes code, executes it in the REPL, and reads the output. This is unchanged from base RLM, with one addition: if a strategy was selected, it's already been injected into the system prompt before the first iteration starts.

Medium — trajectory recording and evaluation. After each completion, the full run gets recorded: the prompt, the model's response, every iteration of code and output, token counts, timing, and whether errors occurred. An evaluator (a separate LLM call) scores the trajectory on a 0-1 scale based on correctness and execution quality. Strategy ratings update in real-time with a weighted running average.

Slow — strategy extraction. After enough trajectories accumulate for a given problem type, the system analyzes patterns across successful and failed runs, then generates new reusable strategies. These aren't generic advice like "plan before coding" — they're concrete tactics extracted from what actually worked, like "compute each intermediate result in a separate variable, print it to verify correctness, then use it in the next step."
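To make the slow loop more concrete, here is a minimal sketch of the trigger and the success/failure contrast, assuming each recorded run is a dict with hypothetical `tag`, `score`, and `summary` keys. The thresholds and the prompt wording are placeholders I chose, not Mem-RLM's own; the resulting prompt would be sent to an LLM that writes the new strategy.

```python
from collections import defaultdict

MIN_TRAJECTORIES = 3     # hypothetical: scored runs per tag needed before extraction
SUCCESS_THRESHOLD = 0.7  # hypothetical: evaluator score that counts as a success


def tags_ready_for_extraction(runs, min_count=MIN_TRAJECTORIES):
    """Return the environment tags that have accumulated enough scored runs."""
    counts = defaultdict(int)
    for run in runs:
        if run["score"] is not None:  # unscored runs don't count yet
            counts[run["tag"]] += 1
    return sorted(tag for tag, n in counts.items() if n >= min_count)


def build_extraction_prompt(runs, tag, threshold=SUCCESS_THRESHOLD):
    """Contrast one tag's successful and failed runs in a prompt for an LLM."""
    scored = [r for r in runs if r["tag"] == tag and r["score"] is not None]
    wins = [r for r in scored if r["score"] >= threshold]
    losses = [r for r in scored if r["score"] < threshold]

    def render(group):
        # One bullet per run; fall back to a marker when the group is empty.
        return "\n".join(f"- score={r['score']:.2f}: {r['summary']}" for r in group) or "- (none)"

    return (
        f"Problem type: {tag}\n\n"
        f"Successful runs:\n{render(wins)}\n\n"
        f"Failed runs:\n{render(losses)}\n\n"
        "Propose one concrete, reusable tactic that the successful runs used "
        "and the failed runs did not."
    )
```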

The selection mechanism is epsilon-greedy: 90% of the time it picks the highest-scoring strategy for the current problem type, 10% of the time it explores a random one. Strategies that consistently underperform get deactivated automatically.
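A minimal epsilon-greedy selector along those lines might look like the sketch below. `Strategy` and `select_strategy` are hypothetical names, and letting exploration draw from deactivated strategies follows the scoring notes later in this post. Treat it as an illustration of the technique, not the library's implementation.

```python
import random
from dataclasses import dataclass

EPSILON = 0.1  # explore 10% of the time, exploit 90%, as described above


@dataclass
class Strategy:
    name: str
    environment_tag: str
    score: float        # current weighted rating in [0, 1]
    active: bool = True


def select_strategy(strategies, environment_tag, epsilon=EPSILON, rng=random):
    """Epsilon-greedy choice among the strategies for one problem type.

    Returns None when nothing applies (e.g. the cold-start case).
    """
    candidates = [s for s in strategies if s.environment_tag == environment_tag]
    if not candidates:
        return None
    if rng.random() < epsilon:
        # Explore: any strategy for this tag, including deactivated ones.
        return rng.choice(candidates)
    active = [s for s in candidates if s.active]
    if not active:
        return None
    # Exploit: the highest-rated active strategy.
    return max(active, key=lambda s: s.score)
```

Passing a seeded `random.Random` as `rng` makes the choice reproducible in tests.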

What it looks like in practice

```python
from memrlm import MemRLM

mem = MemRLM(
    backend="openai",
    backend_kwargs={"model_name": "gpt-4.1-mini"},
    environment="local",
    environment_tag="math",
    auto_evaluate=True,
)

result = mem.completion("What is the sum of the first 100 prime numbers?")
print(result.response)
# The sum of the first 100 prime numbers is 24133.
```

First run starts cold — no strategies available, pure RLM. But the trajectory gets recorded and scored. By the third or fourth run on similar problems, the system has extracted patterns from what worked and starts injecting them. The model stops repeating the same mistakes.

You can also seed strategies manually if you already know what works:

```python
mem.register_strategy(
    environment_tag="math",
    name="direct_compute",
    env_tip=(
        "When solving math problems:\n"
        "1. Parse the problem into variables\n"
        "2. Compute the answer directly in Python\n"
        "3. Assign the result and use FINAL_VAR()"
    ),
)
```

Benchmark results

I ran a 10-problem benchmark spanning math, combinatorics, algorithms, linear algebra, and graph theory, then repeated it for three rounds to see whether strategy accumulation actually helps.

GPT-4.1-nano (weaker model — benefits most from guidance):

| Run | Score | Avg Iterations | Errors |
|---|---|---|---|
| Raw RLM (baseline) | 0.450 | 11.0 | 6 |
| Mem-RLM Round 1 | 0.410 | 11.4 | 6 |
| Mem-RLM Round 2 | 0.470 | 11.9 | 3 |
| Mem-RLM Round 3 | 0.565 | 13.8 | 4 |

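+26% relative improvement by Round 3 (score 0.450 → 0.565), with errors down from 6 to 4, though runs took more iterations on average (13.8 vs. 11.0). Round 1 lands slightly below baseline since there are no strategies yet, but by the third round the accumulated guidance starts making a real difference.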

GPT-4.1-mini (stronger model):

| Run | Score | Avg Iterations | Errors |
|---|---|---|---|
| Raw RLM (baseline) | 0.855 | 6.0 | 1 |
| Mem-RLM Round 1 | 0.720 | 5.2 | 2 |
| Mem-RLM Round 2 | 0.925 | 4.9 | 1 |
| Mem-RLM Round 3 | 0.860 | 5.4 | 2 |

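+8% at peak (Round 2: 0.925 vs. 0.855 baseline). Mini is already strong, so the baseline is high, but the system still finds room to improve, and it does so while using fewer iterations (4.9 vs. 6.0 at peak) and fewer tokens than baseline. The gain is less steady than with nano, though: Round 1 dips to 0.720 and Round 3 falls back to roughly baseline (0.860).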

The takeaway: Mem-RLM helps most when the base model struggles. Weaker models have more to gain from accumulated strategy guidance, which makes sense — if you already get 85% right, there's less room to improve than if you're at 45%.
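The percentages above are relative to each model's baseline. A quick sketch of the arithmetic, using the baseline and best-round scores from the two tables:

```python
def gains(baseline: float, improved: float) -> tuple[float, float]:
    """Return (absolute gain in score points, relative gain as a fraction of baseline)."""
    absolute = improved - baseline
    return absolute, absolute / baseline


# Baseline vs. best Mem-RLM round, taken from the benchmark tables.
results = {
    "gpt-4.1-nano": (0.450, 0.565),  # Round 3
    "gpt-4.1-mini": (0.855, 0.925),  # Round 2
}

for model, (base, best) in results.items():
    absolute, relative = gains(base, best)
    print(f"{model}: {base:.3f} -> {best:.3f} "
          f"(+{absolute:.3f} absolute, +{relative:.0%} relative)")
```

This prints a 0.115-point (+26%) gain for nano and a 0.070-point (+8%) gain for mini.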

What's under the hood

Everything persists in a database — SQLite by default, but it supports PostgreSQL and MySQL for production use. The data model is simple: trajectories (raw execution records), strategies (learned patterns), and run records (links between them).
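For a sense of the data model, here is a minimal SQLite sketch of those three tables. The table and column names are hypothetical (Mem-RLM's actual schema isn't shown in this post), and the DDL uses SQLite types, so PostgreSQL or MySQL would need small adjustments.

```python
import sqlite3

# Hypothetical schema: names are illustrative, not Mem-RLM's own.
SCHEMA = """
CREATE TABLE IF NOT EXISTS trajectories (
    id INTEGER PRIMARY KEY,
    environment_tag TEXT NOT NULL,
    prompt TEXT NOT NULL,
    response TEXT,
    iterations_json TEXT,        -- code/output pairs serialized as JSON
    total_tokens INTEGER,
    elapsed_seconds REAL,
    had_errors INTEGER NOT NULL DEFAULT 0,
    score REAL                   -- evaluator score in [0, 1], NULL until scored
);
CREATE TABLE IF NOT EXISTS strategies (
    id INTEGER PRIMARY KEY,
    environment_tag TEXT NOT NULL,
    name TEXT NOT NULL,
    env_tip TEXT NOT NULL,
    avg_score REAL,              -- NULL until the strategy is first scored
    uses INTEGER NOT NULL DEFAULT 0,
    active INTEGER NOT NULL DEFAULT 1,
    UNIQUE (environment_tag, name)
);
CREATE TABLE IF NOT EXISTS run_records (
    id INTEGER PRIMARY KEY,
    trajectory_id INTEGER NOT NULL REFERENCES trajectories(id),
    strategy_id INTEGER REFERENCES strategies(id)  -- NULL for cold-start runs
);
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the store and make sure all three tables exist."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def record_run(conn, environment_tag, prompt, response, score, strategy_id=None):
    """Store one trajectory and link it to the injected strategy, if any."""
    cur = conn.execute(
        "INSERT INTO trajectories (environment_tag, prompt, response, score) "
        "VALUES (?, ?, ?, ?)",
        (environment_tag, prompt, response, score),
    )
    trajectory_id = cur.lastrowid
    conn.execute(
        "INSERT INTO run_records (trajectory_id, strategy_id) VALUES (?, ?)",
        (trajectory_id, strategy_id),
    )
    conn.commit()
    return trajectory_id
```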

Strategy scoring uses a weighted running average with exponential decay toward recent scores. A strategy that worked well historically but started failing recently will drop faster than a simple average would show. Strategies that fall below 0.25 average score after 5+ uses get automatically deactivated, but they're still eligible during exploration to see if conditions have changed.
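A minimal sketch of that scoring rule, assuming the weighted running average is an exponential moving average. The decay weight `ALPHA = 0.3` is a placeholder I picked; the 0.25 threshold and the five-use minimum come from the text. Reactivating a strategy once its average recovers is also my assumption.

```python
from dataclasses import dataclass
from typing import Optional

ALPHA = 0.3              # hypothetical weight on the newest score (higher = faster decay)
DEACTIVATE_BELOW = 0.25  # threshold from the text
MIN_USES = 5             # from the text: only judge a strategy after 5+ uses


@dataclass
class StrategyStats:
    avg_score: Optional[float] = None  # None until the strategy is first scored
    uses: int = 0
    active: bool = True

    def update(self, score: float, alpha: float = ALPHA) -> None:
        """Fold one evaluator score in [0, 1] into an exponential moving average."""
        if self.avg_score is None:
            self.avg_score = score  # the first score seeds the average
        else:
            # The newest score gets weight alpha; older history decays by (1 - alpha).
            self.avg_score = alpha * score + (1 - alpha) * self.avg_score
        self.uses += 1
        # Deactivate once there is enough evidence of poor results;
        # reactivate if the average climbs back (assumption).
        self.active = not (self.uses >= MIN_USES and self.avg_score < DEACTIVATE_BELOW)
```

With these settings, four 0.9 scores followed by three 0.1 scores leave the weighted average at about 0.37, while a plain mean would still be about 0.56.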

The evaluator is a separate LLM call that sees the full picture — the original prompt, the model's final response, the actual stdout/stderr from the REPL, and execution stats. No regex heuristics, no hardcoded rules. It decides if the answer is correct and scores accordingly.
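Here is a sketch of what such an evaluator call could look like, with a plain `llm` callable standing in for whatever client you use. The prompt wording, the JSON reply format, and treating an unparseable verdict as 0.0 are all assumptions, not Mem-RLM's actual evaluator.

```python
import json
from typing import Callable


def build_evaluator_prompt(prompt, response, stdout, stderr, stats):
    """Give the judge the full picture: task, answer, REPL output, and stats."""
    return (
        "You are grading a model's run in a Python REPL.\n\n"
        f"Task:\n{prompt}\n\n"
        f"Final response:\n{response}\n\n"
        f"REPL stdout:\n{stdout or '(empty)'}\n\n"
        f"REPL stderr:\n{stderr or '(empty)'}\n\n"
        f"Execution stats: {json.dumps(stats, sort_keys=True)}\n\n"
        'Reply with JSON only: {"correct": true|false, "score": <number from 0 to 1>, '
        '"reason": "<one sentence>"}'
    )


def evaluate(llm: Callable[[str], str], prompt, response, stdout="", stderr="", stats=None):
    """Score one trajectory with a separate LLM call; returns a float in [0, 1]."""
    raw = llm(build_evaluator_prompt(prompt, response, stdout, stderr, stats or {}))
    try:
        verdict = json.loads(raw)
        score = float(verdict["score"])
    except (json.JSONDecodeError, KeyError, TypeError, ValueError):
        return 0.0  # assumption: an unparseable verdict counts as a failed evaluation
    # Clamp in case the judge strays outside the requested range.
    return min(1.0, max(0.0, score))
```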

Why this matters

RLMs are one of the more interesting inference paradigms to come out recently — giving models a real execution environment changes what they can do. But stateless inference means every run is independent, and that's a waste. Models encounter the same problem types repeatedly and there's no mechanism to carry forward what they've learned.

Mem-RLM makes RLM inference stateful. Over time, the system builds a repertoire of strategies tuned to specific problem domains. Math problems get math strategies, graph problems get graph strategies, and the model stops rediscovering the same approaches from scratch every time.

This is open source and designed to be a drop-in wrapper — if you're already using RLM, switching to Mem-RLM is a one-line change.